Image Classification: An Overview

Thanks to the spread of IoT and AI, we now have more user and trend data than ever. This data varies in form: it can be text, images, speech, or a mix of these, and images make up a growing share of it.

However, image data is unstructured and requires advanced methods such as deep learning models to analyze. Image classification, arguably the most crucial part of digital image analysis, now relies on AI systems based on deep learning models to achieve more accurate results.

Image Classification

Image classification involves categorizing an image under preset labels or land cover themes. The end goal is to extract information from an image and express it either as a class (e.g., dog/cat) or as a probability (e.g., there is an 85% chance that this is an elephant).
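To make the difference between a class and a probability concrete, here is a minimal sketch of how raw model scores are commonly turned into probabilities with a softmax function, and how the most likely class is then picked. The labels and scores are hypothetical values chosen only for illustration; they do not come from a real model.

```python
import math


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical labels and raw scores (assumptions for this example).
labels = ["cat", "dog", "elephant"]
scores = [0.5, 1.0, 3.0]

probs = softmax(scores)

# The predicted class is the label with the highest probability.
best = max(range(len(labels)), key=lambda i: probs[i])
predicted_label = labels[best]

print(predicted_label, round(probs[best], 2))
```

The same output can therefore be reported either as a single label or as the full list of per-class probabilities, depending on what the application needs.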

While a person can classify images naturally, one might wonder how a computer learns to do so. The most common answer is a Convolutional Neural Network (CNN), a type of deep learning model built on machine learning concepts.

CNNs learn features from training data on their own, and they need only a small amount of preprocessing. A CNN creates and adapts its own image filters, whereas most other algorithms and models need these filters to be coded by hand. To do this, a CNN is organized into a set of layers, each of which performs a specific function.

Demystifying CNN Layers

The basic unit of a CNN is the neuron, loosely modeled after the neurons of the human brain. A neuron is a mathematical function that computes a weighted sum of its inputs and applies an activation function to the result. A group of neurons is called a layer, and each layer typically has a specific function.
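As a rough illustration of the neuron described above, the sketch below computes a weighted sum of the inputs plus a bias and passes it through a ReLU activation. The input values, weights, and biases are made up for the example.

```python
def relu(x):
    """ReLU activation: negative values become zero."""
    return max(0.0, x)


def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, followed by an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return relu(z)


# Hypothetical inputs and weights (assumptions for this example).
inputs = [1.0, 2.0, 3.0]
weights = [0.5, -0.25, 0.25]

out_active = neuron(inputs, weights, bias=0.5)     # weighted sum is positive
out_inactive = neuron(inputs, weights, bias=-2.0)  # weighted sum is negative

print(out_active, out_inactive)
```

During training, the weights and bias are the values that get adjusted; the structure of the function stays the same.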

A CNN model may have anywhere from three to 150 or more layers. The output of one layer serves as the input to the next.

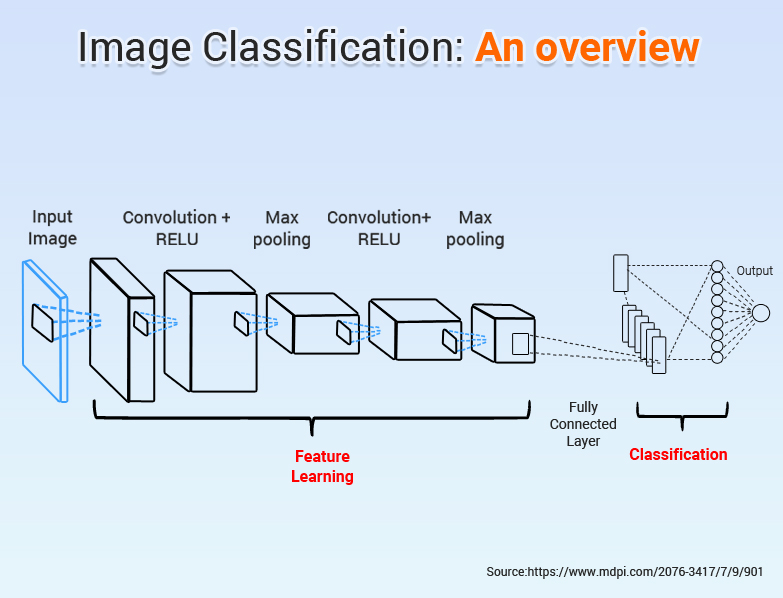

There are four main types of CNN layers: the convolution layer, the ReLU layer, the pooling layer, and the fully connected layer.

- Convolution Layer: This layer has a set of learnable filters that have a small receptive field but extend through the full depth of the input. Convolution layers are usually the most numerous layers in a CNN.

- ReLU Layer: A Rectified Linear Unit (ReLU) layer applies an activation function that replaces negative values with zero. This non-linearity is cheap to compute, and CNNs that use ReLU layers are generally easier to train and produce better results.

- Pooling Layer: A pooling layer summarizes small regions of the preceding layer's output, for example by keeping only the maximum value in each region. Its main job is to reduce the amount of data being considered and produce a more compact output, which lowers the computation needed by later layers and brings the CNN closer to an accurate prediction.

- Fully Connected Layer: A fully connected layer is typically the final output layer of a CNN. It takes the flattened output of the preceding layers and produces the final result, such as a score for each class.
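The four layer types can be sketched in a few lines of plain Python. This is a simplified illustration, assuming a single-channel image, one fixed filter, no padding, and a stride of 1; the image, filter, and fully connected weights are hypothetical values, whereas a real CNN would learn them during training.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Replace every negative value with zero."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    return [
        [
            max(feature_map[r + i][c + j]
                for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]


def fully_connected(feature_map, weights, biases):
    """Flatten the input, then compute one weighted sum per output class."""
    flat = [v for row in feature_map for v in row]
    return [sum(w * x for w, x in zip(ws, flat)) + b
            for ws, b in zip(weights, biases)]


# Hypothetical 5x5 image with a bright vertical stripe in columns 2 and 3.
image = [[0, 0, 1, 1, 0] for _ in range(5)]
# Hypothetical 2x2 filter that responds to dark-to-bright vertical edges.
kernel = [[-1, 1], [-1, 1]]

conv = conv2d(image, kernel)   # 4x4 feature map
activated = relu(conv)         # negative responses removed
pooled = max_pool(activated)   # 2x2 summary
# Hypothetical weights for two output classes.
scores = fully_connected(pooled, [[1, 0, 1, 0], [0, 1, 0, 1]], [0, 0])

print(conv[0], activated[0], pooled, scores)
```

Each function consumes the output of the previous one, which mirrors how the output of one layer becomes the input to the next.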

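In general, a CNN architecture stacks these layers in sequence: convolution and ReLU layers repeated several times, with pooling layers in between, followed by one or more fully connected layers at the end.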
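One way to get a feel for such a stack is to trace how the spatial size of the data changes from layer to layer. The sketch below uses the standard output-size formula for convolution and pooling, `(size + 2 * padding - kernel) // stride + 1`. The layer stack and the 32x32 input size are hypothetical and chosen only for illustration.

```python
def output_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


# Hypothetical layer stack (an assumption, not a specific published model).
layers = [
    ("conv", {"kernel": 3, "stride": 1, "padding": 1}),
    ("relu", {}),
    ("pool", {"kernel": 2, "stride": 2, "padding": 0}),
    ("conv", {"kernel": 3, "stride": 1, "padding": 1}),
    ("relu", {}),
    ("pool", {"kernel": 2, "stride": 2, "padding": 0}),
]


def trace_sizes(input_size, layers):
    """Return the spatial size after the input and after each layer."""
    sizes = [input_size]
    for kind, params in layers:
        if kind in ("conv", "pool"):
            sizes.append(output_size(sizes[-1], **params))
        else:
            # Activation layers such as ReLU do not change the size.
            sizes.append(sizes[-1])
    return sizes


sizes = trace_sizes(32, layers)
print(sizes)
```

In this sketch the padded convolutions preserve the size and each pooling layer halves it, so the fully connected layers at the end receive a much smaller input than the original image.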

Importance of Image Classification for Your Business

From Facebook’s face recognition programs to Google Lens, the computer vision and image processing industry is making full use of CNNs. An excellent example of using AI to solve a business problem is the solution that AISmartz recently developed for BeArty, which automatically categorizes products to help boost web traffic and, in turn, revenue.

Conclusion

Image analysis and computer vision experts agree that using AI, and CNNs in particular, for image classification is a revolutionary step forward. Because CNNs learn from data, their accuracy generally improves as they are trained on more data. If your business depends on image analysis and classification, it is high time to implement your own CNN-based image classification system.
